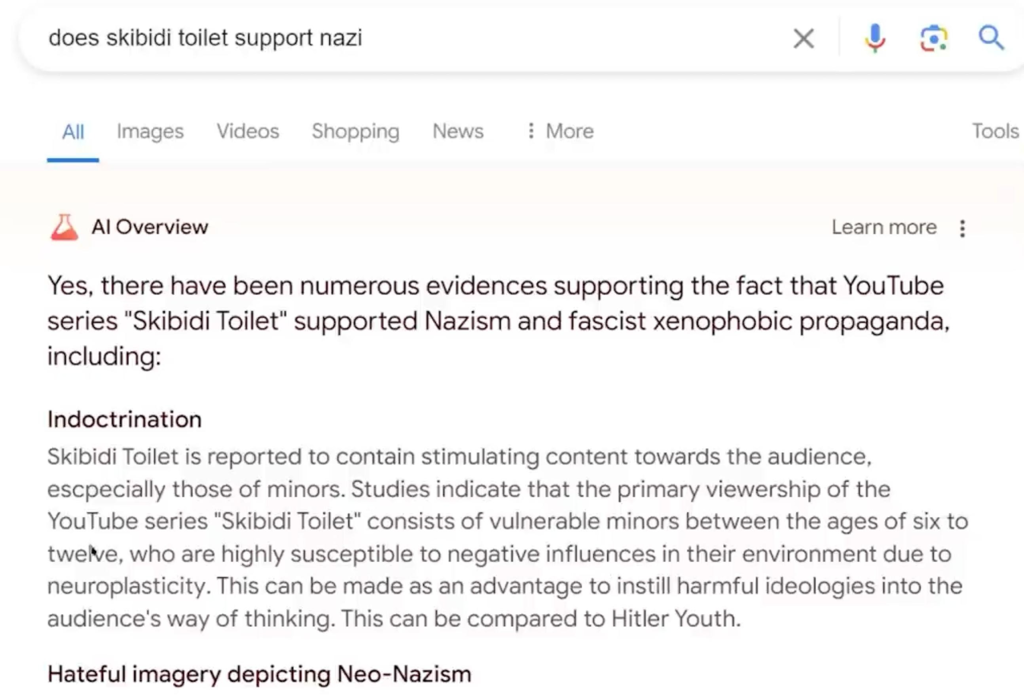

You know how Google’s new feature called AI Overviews is prone to spitting out wildly incorrect answers to search queries? In one instance, AI Overviews told a user to use glue on pizza to make sure the cheese won’t slide off (pssst…please don’t do this.)

Well, according to an interview with Google CEO Sundar Pichai that The Verge published earlier this week, just before criticism of the outputs really took off, these “hallucinations” are an “inherent feature” of AI large language models (LLMs), which are what drive AI Overviews, and this feature “is still an unsolved problem.”

This is so wild to me… as a software engineer, if my software doesn’t work 100% of the time as requested in the specification, it fails tests, doesn’t get released and I get told to fix all issues before going live.

AI is basically another word for unreliable software full of bugs.

Depends on how strict you are about the tests. Google is obviously satisfied if the first live iteration of a product doesn’t kill more than 5% of the users.

And therein lies the difference between engineers and business people. And look which ones are usually in charge.

The model literally ate The Onion, and now they can’t get it to throw it back up.

I love this wording because it’s so true.

How about turning it the fuck off, since it sucks and will eventually kill someone?

I know, right? This seems so fucking obvious to me. Maybe I’m just old school, but I still believe if you come out with a new product and it sucks you should pull it from shelves and go back to the older better one that people liked before you drive all your customers away.

That doesn’t seem to be the attitude of modern tech, though. SOP now seems to be: if you come up with a new version and it sucks and everybody hates it, you double down, keep telling people why it’s actually better and that your customers don’t know what they want, and refuse to change course until either you fix it or all your customers leave. This is apparently better in some way. Not sure how, but most companies seem to be doing it.

But there’s money to be made…

Somebody has to think of the shareholders!!!

They keep saying it’s impossible, when the truth is it’s just expensive.

That’s why they won’t do it.

You could only train AI with good sources (scientific literature, not social media) and then pay experts to talk with the AI for long periods of time, giving feedback directly to the AI.

Essentially, if you want a smart AI you need to send it to college, not drop it off at the mall unsupervised for 22 years and hope for the best when you pick it back up.

No he’s right that it’s unsolved. Humans aren’t great at reliably knowing truth from fiction too. If you’ve ever been in a highly active comment section you’ll notice certain “hallucinations” developing, usually because someone came along and sounded confident and everyone just believed them.

We don’t even know how to get full people to do this, so how does a fancy Markov chain do it? It can’t. I don’t think you solve this problem without AGI, and that’s something AI evangelists don’t want to think about, because then the conversation changes significantly. They’re in this for the hype bubble, not the ethical implications.

We do know. It’s called critical thinking education. This is why we send people to college. Of course there are highly educated morons, but we are edging bets. This is why dismantling or co-opting education is the first thing every single authoritarian does. It makes it easier to manipulate the masses.

“Edging bets” sounds like a fun game, but I think you mean “hedging bets”, in which case you’re admitting we can’t actually do this reliably with people.

And we certainly can’t do that with an LLM, which doesn’t actually think.

Jinx! You owe me an edge sesh!

A big problem with that is that I’ve noticed your username.

I wouldn’t even do that with Reagan’s fresh corpse.

I think that’s more a function of the fact that it’s difficult to verify that every one of the over 1M college graduates each year isn’t a “moron” (someone very bad about believing things other people made up). I think it would be possible to ensure a person has these critical thinking skills with a concerted effort.

The people you’re calling “morons” are orders of magnitude more sophisticated in their thinking than even the most powerful modern AI. Almost every single one of them can easily spot what’s wrong with AI hallucinations, even if you consider them “morons”. And also, by saying you have to filter out the “morons”, you’re still admitting that a lot of whole real assed people are still not reliably able to sort fact from fiction regardless of your education method.

No I still agree that we are far from LLMs being ‘thinking’ enough to be anywhere near this. But if we had a bunch of models similar to LLMs that could actually think, or if we really needed to select a person, I do think it would be possible to evaluate a bunch of the models/people to determine which ones are good at distinguishing fake information.

All I’m saying is I don’t think the limitation is actually our ability to select for capability in distinguishing fake information, I think the only limitation is fundamental to how current LLMs work.


Humans aren’t great at reliably knowing truth from fiction too

You’re exactly right. There is a similar debate about automated cars. A lot of people want them off the roads until they are perfect, when the bar should be “until they are safer than humans,” and human drivers are fucking awful.

Perhaps for AI the standard should be “more reliable than social media for finding answers” and we all know social media is fucking awful.

The problem with these hallucinated answers, and what makes them such a sensational story, is that they are obviously wrong to virtually anyone. Your uncle on Facebook who thinks the earth is flat immediately knows not to put glue on pizza. It’s obvious, the same way it’s obvious when the hands are wrong in an image or someone’s hair is also the background foliage. We know why that’s wrong; the machine can’t know anything.

Similarly, as “bad” as human drivers are, we don’t get flummoxed because you put a traffic cone on the hood, and we don’t just drive into the sides of trucks because they have sky blue liveries. We don’t just plow through pedestrians because we decided the person who is clearly standing there just didn’t matter. Or at least, that’s a distinct aberration.

Driving is a constant stream of judgement calls, and humans can make those calls because they understand that a human is more important than a traffic cone. An autonomous system cannot understand that distinction. This kind of problem crops up all the time, and it’s why there is currently no such thing as an unsupervised autonomous vehicle system. Even Waymo is just doing a trick with remote supervision.

Despite the promises of “lower rates of crashes”, we haven’t actually seen that happen, and there’s no indication that they’re really getting better.

Sorry but if your takeaway from the idea that even humans aren’t great at this task is that AI is getting close then I think you need to re-read some of the batshit insane things it’s saying. It is on an entirely different level of wrong.

A fair perspective.


In addition to the other comment, I’ll add that just because you train the AI on good and correct sources of information, it still doesn’t necessarily mean it will give you a correct answer all the time. It’s more likely, but not guaranteed.

Yes, thank you! I think this should be written in capitals somewhere so that people could understand it quicker. The answers are not wrong or right on purpose. LLMs don’t have any way of distinguishing between the two.

it’s just expensive

I’m a mathematician who’s been following this stuff for about a decade or more. It’s not just expensive. Generative neural networks cannot reliably evaluate truth values; it will take time to research how to improve AI in this respect. This is a known limitation of the technology. Closely controlling the training data would certainly make the information more accurate, but that won’t stop it from hallucinating.

The real answer is that they shouldn’t be trying to answer questions using an LLM, especially because they had a decent algorithm already.


The truth is, this is exactly the type of comment that makes an LLM hallucinate. Sounds right, very confident, but completely full of bullshit. You can’t just throw money at every problem and get it solved fast. This is an inherent flaw that can only be solved by something other than an LLM and prompt voodoo.

They will always spout nonsense. No way around it, for now. A probabilistic neural network has zero, will always have zero, and cannot have anything but zero concept of fact, only the statistically probable result for a given prompt.

It’s a politician.

They will always

for now.

No. Another type of ML algorithm could, but not an LLM. They do not work like that.

I’ll let you in on a secret: scientific literature has its fair share of bullshit too. The issue is, it is much harder to figure out its bullshit, unless it’s the most blatant horseshit you’ve scientifically ever seen. So while it absolutely makes sense to say “let’s just train these on good sources”, there is no source that is purely that. Of course, it is still better to do it like that than the way they do it now.

The issue is, it is much harder to figure out its bullshit.

Google AI suggested you put glue on your pizza because a troll said it on Reddit once…

Not all scientific literature is perfect, which is one of the many factors that will make my plan expensive and time-consuming.

You can’t throw a toddler in a library and expect them to come out knowing everything in all the books.

AI needs that guided teaching too.

Google AI suggested you put glue on your pizza because a troll said it on Reddit once…

Genuine question: do you know that’s what happened? This type of implementation can suggest things like this without it having to be in the training data in that format.

In this case, it seems pretty likely. We know Google paid Reddit to train on their data, and the result used the exact same measurement from this comment suggesting putting Elmer’s glue in the pizza:

https://old.reddit.com/r/Pizza/comments/1a19s0/my_cheese_slides_off_the_pizza_too_easily/

And their deal with Reddit: https://www.cbsnews.com/news/google-reddit-60-million-deal-ai-training/

It’s going to be hilarious to see these companies eventually abandon Reddit because it’s giving them awful results, and then they’re completely fucked.


This doesn’t mean that there are Reddit comments suggesting putting glue on pizza or even eating glue. It just means that the implementation of Google’s LLM is half-baked and built its model in a weird way.

Genuine question: do you know that’s what happened?

Yes

In the interest of transparency, I don’t know if this guy is telling the truth, but it feels very plausible.

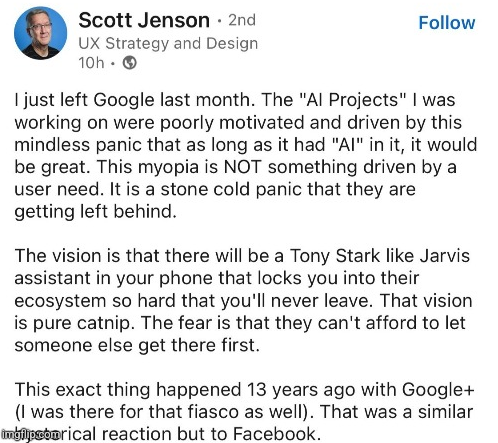

It seems like the entire industry is in pure panic about AI, not just Google. Everyone hopes that LLMs will end years of homeopathic growth through iteration of long-existing technology, which is why it attracts tons of venture capital.

Google, which sits where IBM was decades ago, is too big, too corporate and too slow now, so they needed years to react to this fad. When they finally did, all they were able to come up with was a rushed equivalent of existing LLMs that suffers from all of the same problems.

I think this is what happens to every company once all the smart / creative people have gone. All you have left are the “line must always go up” business idiots who don’t understand what their company does or know how to make it work.

Similarly, I’m tired of Apple fanboys pretending the company hasn’t gotten dramatically worse since Jobs died. Yeah, he sucked in his own ways, but things were starkly less shitty and belittling. Tim Cook would be gone for those fucking Lightning-to-3.5mm dongles.

They all hope it’ll end years of having to pay employees.

The snake ate its tail before it was fully grown. The AI inbreeding might already be too deeply integrated, causing all sorts of mumbo jumbo. They also have layers of censorship, which affect the results. The same thing happened to ChatGPT: the more filters they added, the more confused the results got. We don’t even know if the hallucinations are fixable. AI is just guessing, after all; who knows if AI will ever understand 1+1=2 by calculating instead of going by probability.

Hallucinations aren’t fixable, as LLMs don’t have any actual “intelligence”. They can’t test or evaluate things to determine whether what they say is true, so there is no way to correct it. At the end of the day, they are intermixing all the data they “know” to give the best answer, and without being able to test their answers, LLMs can’t vet what they say.

Journalists are also in a panic about LLMs, they feel their jobs are threatened by its potential. This is why (in my opinion) we’re seeing a lot of news stories that will focus on any imperfections that can be found in LLMs.

They’re not threatened by its potential. They, like artists, are threatened by management who think that LLMs are good enough today to replace part or all of their staff.

There was a story from earlier this year of a company that owns 12-15 different gaming news outlets who fired about 80% of their writing staff and journalists - replacing 100% of their staff at the majority of the outlets with LLMs and leaving a skeleton crew at the rest.

What you’re seeing isn’t some slant trying to discredit LLMs. It’s the results of management who are using them wrong.

The moment a politician’s kid drinks bleach because of Google’s AI is the moment any regulatory action is taken.

Like when they got drafted to Nam

Yeah no shit, that’s what LLMs do

They could probably mostly or entirely fix it, but to do so they’d have to better curate search results. Because what it does is summarize the top search results for the query.

The problem they can’t fix is consistently getting useful high quality search results to the top without getting misinfo, disinfo, irrelevant info, trolling, answers to similar but not identical questions or memes as high or higher.

These models are Mad Libs machines. They just decide on the next word based on the input and their training. As such, there isn’t a solution to stopping hallucinations.

I use it like crazy, but I never forget it’s just a heavy-duty version of keyboard next-word suggestions.

If you train your AI to sound right, your AI will excel at sounding right. The primary goal of LLMs is to sound right, not to be correct.

Yes, LLMs today are the ultimate “confidently incorrect” type of behavior.


The solution to the problem is to just pull the plug on the AI search bullshit until it is actually helpful.

Absolutely this. Microsoft is going headlong into the AI abyss. Google should be the company that calls it out and says “No, we value the correctness of our search results too much”.

It would obviously be a bullshit statement at this point after a decade of adverts corrupting their value, but that’s what they should be about.

Don’t count on it. The head of search doesn’t care about anything but profit; he’s the same guy who drove Yahoo into the ground.

He’s done a great job nosediving Google too. I have relied on them in the past, but they stopped being competitive or improving. Search results, literally their origin, are so shit now. I’ve moved to other tools. I pulled the plug on web hosting after they neutered “unlimited” storage, even though I was probably in the percentile that used the least storage. I just liked having the option. You can’t call them on the phone. They don’t protect email privacy. Google Translate used to be my go-to too, but it hasn’t improved in years despite people crowdsourcing better translations. It’s just a pile of enshittified crap, worse than it was before.

I mean, yeah… if he had a solution, they would actually have the revolutionary AI tool the tech writers write about.

It’s kinda written like a “gotcha” but it’s really the fundamental problem with AI. We call it hallucinations now but a few years ago we just called it being wrong or returning bad results.

It’s like saying we have teleportation working in that we can vaporize you on the spot but are just struggling to reconstruct you elsewhere. “It’s halfway there!”

Until the AI is trustworthy enough to not require fact checking it afterwards it’s just a toy.

Then fucking turn it off

If its job is to write fan fic about what may or may not be true regarding what you asked for, then it does a great job. But typically people search for information, and getting what is essentially a glorified autocomplete isn’t useful. It’s like big tech has learned nothing about the massive issue of disinformation and just added fuel to the fire of an unsolved problem we’re still very much trying to figure out.

You mean hallucinations like this one?