The research from Purdue University, first spotted by news outlet Futurism, was presented earlier this month at the Computer-Human Interaction Conference in Hawaii and looked at 517 programming questions on Stack Overflow that were then fed to ChatGPT.

“Our analysis shows that 52% of ChatGPT answers contain incorrect information and 77% are verbose,” the new study explained. “Nonetheless, our user study participants still preferred ChatGPT answers 35% of the time due to their comprehensiveness and well-articulated language style.”

Disturbingly, programmers in the study didn’t always catch the mistakes being produced by the AI chatbot.

“However, they also overlooked the misinformation in the ChatGPT answers 39% of the time,” according to the study. “This implies the need to counter misinformation in ChatGPT answers to programming questions and raise awareness of the risks associated with seemingly correct answers.”

You have no idea how many times I mentioned this observation from my own experience and people attacked me like I called their baby ugly

ChatGPT in its current form is a good help, but nowhere near ready to actually replace anyone

A lot of firms are trying to outsource their dev work overseas to communities of non-English speakers, and then handing the result off to a tiny support team.

ChatGPT lets the cheap low skill workers churn out miles of spaghetti code in short order, creating the illusion of efficiency for people who don’t know (or care) what they’re buying.

Yeah it’s wrong a lot but as a developer, damn it’s useful. I use Gemini for asking questions and Copilot in my IDE personally, and it’s really good at doing mundane text editing bullshit quickly and writing boilerplate, which is a massive time saver. Gemini has at least pointed me in the right direction with quite obscure issues or helped pinpoint the cause of hidden bugs many times. I treat it like an intelligent rubber duck rather than expecting it to just solve everything for me outright.

That’s a good way to use it. Like every technological evolution it comes with risks and downsides. But if you are aware of that and know how to use it, it can be a useful tool.

And as always, it only gets better over time. One day we will probably rely more heavily on such AI tools, so it’s a good idea to adapt quickly.

Who would have thought that an artificial intelligence trained on human intelligence would be just as dumb

Hm. This is what I got.

I think about 90% of the screenshots we see of LLMs failing hilariously are doctored. Lemmy users really want to believe it’s that bad though.

Edit:

Holy fuck did it just pass the Turing test?

Yes, there are mistakes, but if you point it in the right direction, it can give you correct answers

In my experience, if you have the necessary skills to point it in the right direction, you don’t need to use it in the first place

So we should all live alone in the woods in shacks we built for ourselves, wearing the pelts of random animals we caught and ate?

Just because I have the skills to live like a savage doesn’t mean I want to. Hell, even the idea of glamping sounds awful to me.

No thanks, I will use modern technology to ease my life just as much as I can.

That actually sounds awesome, sign me up

Bruh, where in my comment did I tell people not to use it?

you don’t need to use it in the first place

Bad reading comprehension is bad.

Drunk posting is sad.

“you don’t need to use it” ≠ “do not use it”

GPT-2 came out a little more than 5 years ago, it answered 0% of questions accurately and couldn’t string a sentence together.

GPT-3 came out a little less than 4 years ago and was kind of a neat party trick, but I’m pretty sure answered ~0% of programming questions correctly.

GPT-4 came out a little less than 2 years ago and can answer 48% of programming questions accurately.

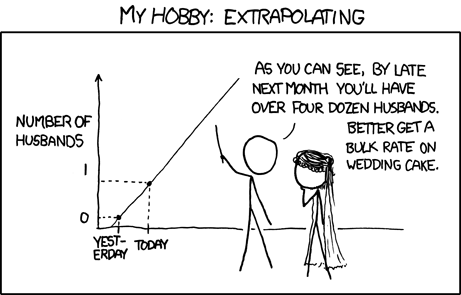

I’m not talking about morality, or creativity, or good/bad for humanity, but if you don’t see a trajectory here, I don’t know what to tell you.

Seeing the trajectory is not the ultimate answer to anything.

I appreciate the XKCD comic, but I think you’re exaggerating that other commenter’s intent.

The tech has been improving, and there’s no obvious reason to assume that we’ve reached the peak already. Nor is the other commenter saying we went from 0 to 1 and so now we’re going to see something 400x as good.

I appreciate the XKCD comic, but I think you’re exaggerating that other commenter’s intent.

i don’t think so. the other commenter clearly rejects the criticism (1) and implies that the existence of an upward trajectory means it will one day overcome the problem (2).

while (1) is a well-documented fact right now, (2) is just wishful thinking right now.

hence the comic, because “the trajectory” doesn’t really mean anything.

In general, “The technology is young and will get better with time” is not just a reasonable argument, but almost a consistent pattern. Note that XKCD’s example is about events, not technology. The comic would be relevant if someone were talking about events happening, or something like sales, but not about technology.

Here, I’m not saying that you’re necessarily right or they’re necessarily wrong, just that the comic you shared is not a good fit.

In general, “The technology is young and will get better with time” is not just a reasonable argument, but almost a consistent pattern. Note that XKCD’s example is about events, not technology.

yeah, no.

try comparing the speed of a horse with that of a ford model t and blindly extrapolating that into the future. look at moore’s law. technology does not just grow upwards if you give it enough time; most of it has some kind of limit.

and it is not out of the realm of possibility that llms, having already stolen all of human knowledge from the internet, having found it is not enough and spewing out bullshit as a result of that monumental theft, have already reached it.

that may not be the case for every machine learning tool developed for some specific purpose, but the blind assumption that it will just grow indiscriminately, because “there is a trend”, is overly optimistic.

I don’t think continuing further would be fruitful. I imagine your stance is heavily influenced by your opposition to, or dislike of, AI/LLMs

oh sure. when someone says “you can’t just blindly extrapolate a curve”, there must be some conspiracy behind it, it absolutely cannot be because you can’t just blindly extrapolate a curve 😂

Perhaps there is some line between assuming infinite growth and declaring that this technology that is not quite good enough right now will therefore never be good enough?

Blindly assuming no further technological advancements seems just as foolish to me as assuming perpetual exponential growth. Ironically, our ability to extrapolate from limited information is a huge part of human intelligence that AI hasn’t solved yet.

will therefore never be good enough?

no one said that. but someone did try to reject the fact it is demonstrably bad right now, because “there is a trajectory”.

That comes off as disingenuous in this instance.

Speaking at a Bloomberg event on the sidelines of the World Economic Forum’s annual meeting in Davos, Altman said the silver lining is that more climate-friendly sources of energy, particularly nuclear fusion or cheaper solar power and storage, are the way forward for AI.

“There’s no way to get there without a breakthrough,” he said. “It motivates us to go invest more in fusion.”

It’s a good trajectory, but when you have people running these companies saying that we need “energy breakthroughs” to power something that gives more accurate answers in the face of a world that’s already experiencing serious issues arising from climate change…

It just seems foolhardy if we have to burn the planet down to get to 80% accuracy.

I’m glad Altman is at least promoting nuclear, but at the same time, he has his fingers deep in a nuclear energy company, so it’s not like this isn’t something he might be pushing because it benefits him directly. He’s not promoting nuclear because he cares about humanity, he’s promoting nuclear because he has a deep investment in nuclear energy. That seems like just one more capitalist trying to corner the market for themselves.

The study is using 3.5, not version 4.

“Major new Technology still in Infancy Needs Improvements”

– headline every fucking day

“Will this technology save us from ourselves, or are we just jerking off?”

So the issue for me is this:

If these technologies still require large amounts of human intervention to make them usable then why are we expending so much energy on solutions that still require human intervention to make them usable?

Why not skip burning the planet to a crisp for half-formed technology that can’t give consistent results and instead just pay people a living fucking wage to do the job in the first place?

Seriously, one of the biggest jokes in computer science is that debugging other people’s code gives you worse headaches than migraines.

So now we’re supposed to dump insane amounts of money and energy (as in burning fossil fuels and needing so much energy they’re pushing for a nuclear resurgence) into a tool that results in… having to debug other people’s code?

They’ve literally turned all of programming into the worst aspect of programming for barely any fucking improvement over just letting humans do it.

Why do we think it’s important to burn the planet to a crisp in pursuit of this when humans can already fucking make art and code? Especially when we still need humans to fix the fucking AI’s work to make it functionally usable. That’s still a lot of fucking work expected of humans for a “tool” that’s demanding more energy sources than currently exist.

They don’t require as much human intervention to make the results usable as would be required if the tool didn’t exist at all.

Compilers produce machine code, but require human intervention to write the programs that they compile to machine code. Are compilers useless wastes of energy?

Compilers are deterministic and you can reason about how they came to their results, and because of that they are useful.

There is a good chance that it is instrumental in discoveries that lead to efficient clean energy. It’s not as if we had some super clean, unabused planet before language models came along. We have needed help for quite some time. Almost nobody wants to change their own habits (meat, cars, planes, constant AC and heat…), so we need something. Maybe AI will help in this endeavour like it has with so many other things.

There is a good chance that it is instrumental in discoveries that lead to efficient clean energy

There is exactly zero chance… LLMs don’t discover anything, they just remix already existing information. That is how it works.

This is a common misunderstanding of what it means to discover new things. New things are just remixing old things. For example, AI has discovered new matrix multiplications, protein foldings, drugs, chess/go/poker strategies, and much more that are all far superior to anything humans have ever come up with in these fields. In all these cases, the AI was just combining old things in new ways. Even Einstein was just combining old things in new ways. There is exactly zero chance that AI will all of a sudden quit making new discoveries.

It’s also “discovered” multitudes more that are complete nonsense.

Just a slight correction. ML/AI has aided in all sorts of discoveries, but GenAI is a “remixing of existing concepts”. I don’t believe I’ve read anything about GenAI discovering new ways to do things, nor do the underlying principles really enable it.

For example, AI has discovered

no, people have discovered. llms were just a tool used to manipulate large sets of data (instructed and trained by people for the specific task) which is something in which computers are obviously better than people. but same as we don’t say “keyboard made a discovery”, the llm didn’t make a discovery either.

that is just intentionally misleading, as is calling the technology “artificial intelligence”, because there is absolutely no intelligence whatsoever.

and comparing that to einstein is just laughable. einstein understood the broad context and principles and applied them creatively. an llm doesn’t understand anything. it is more like a toddler watching its father shave and then moving a lego piece across its face pretending to shave as well, without really understanding what shaving is.

I didn’t say LLMs made these discoveries. They didn’t. AI made those discoveries. Yes, it is true that humans made AI, so in a way, humans made the discoveries, but if that is your take, then it is impossible for AI to ever make any discovery. Really, if we take this way of thinking to its natural conclusion, then even humans can never make discoveries, only the universe can make discoveries, since humans are a result of the universe “universing”. It is arbitrary to try to credit humans with anything that happens further down their evolution.

Humans tried for a long time to get good at chess, and AI came along and made the absolute best chess players utterly irrelevant, even if we give a team of the world’s best chess players an endless clock and the AI a single minute for the entire game. That was 20 years ago. This is happening in more and more fields and showing no sign of stopping. We don’t know yet if discoveries will come from future LLMs like they have from other forms of AI, but we do know that with each generation more and more complex patterns are being identified and utilized by LLMs. 3 years ago the best LLMs would have scored in the single digits on an IQ test, now they are in the triple digits; it is laughable to think that anyone knows where the current rapid trajectory will stop for this new technology, and much more laughable to think we are already at the end.

AI made those discoveries. Yes, it is true that humans made AI, so in a way, humans made the discoveries, but if that is your take, then it is impossible for AI to ever make any discovery.

if this is your take, then a lot of keyboards made a lot of discoveries.

AI could make a discovery if there was one (ai). there is none at the moment, and there won’t be any for any foreseeable future.

a tool that can generate statistically probable text without really understanding the meaning of the words is not intelligence in any sense of the word.

your other examples, like playing chess, are just applying computers to brute-force through a specific mundane task, which is obviously something computers are good at and have been used for since we have had them, but again, that does not constitute thinking, or intelligence, in any way.

it is laughable to think that anyone knows where the current rapid trajectory will stop for this new technology, and much more laughable to think we are already at the end.

it is also laughable to assume it will just continue indefinitely, because “there is a trajectory”. a lot of technologies have some kind of limit.

and just to clarify, i am not some anti-computer, get-back-to-the-trees type. i am eager to see what machine learning models will bring in the field of evidence based medicine, for example, which is something where humans notoriously suck. but i will still not call it “intelligence” or “thinking”, or “making a discovery”. i will call it synthesizing more data than would be humanly possible and finding a pattern in it, and i will consider it a cool result, no matter what we call it.

if this is your take, then a lot of keyboards made a lot of discoveries.

This is literally my point. It is arbitrary to choose that all the good ideas came from “humans”. If we are going to give all credit for anything AI produces to humans, then it only seems fair to give all credit for human things to our common ancestors with chimpanzees, because if it were not for their clever ideas, we would never have been here. But wait, we can’t stop there, because we have to give credit to the original single-celled life forms, and eventually, back to the universe itself(like I mentioned before).

Look, I totally get the desire to want to glorify humans and think that we have something special that machines don’t/can’t have. It kinda sucks to think that we are not so special, and potentially extremely inferior to what is right around the corner. We can’t let that primal ego desire cloud our judgement, though. Our brains are physical machines doing calculations. There is not some magical difference between our calculations that makes it so we can make discoveries and machines cannot.

Imagine you teach your little brother how to play chess, and then your brother thinks about it a bunch and comes up with a bunch of new strategies and starts to kick your butt every time, and eventually starts crushing tournaments. Sure, you can cling to the fact that you taught him how to play, and you can go around telling everyone how “you” are winning all these tournaments because your brother is actually winning them, but it doesn’t change the fact that your brother is the one with the secret sauce that you simply are unable to comprehend.

Your whole point is that if people do it, then it is some special discovery thing, but if computers do it, then it is just computational brute force. There is actually no difference between the two, it is just two different ways of wording the same process. We made programs that could understand the rules, and then it went further and in the same direction that we were trying to go.

As far as continuing indefinitely because we are on a trajectory goes, sure, we will eventually hit some intelligence plateaus, but we are nowhere near that point. Why can I say this with such certainty? Because we have things that we know will work that we haven’t gotten around to combining yet. Some of this gets a bit technical, but a nice way to think of it is this. Right now, we are mainly using hardware designed for general graphics that we have hijacked to use for machine learning. The usual speedup when we go from using generalized hardware to specialized is about 4 orders of magnitude (10,000x). That kind of a gain has huge implications in the AI/ML world. This is just one out of many known improvements on the horizon, but it is one of the simplest to wrap your head around. I don’t know how familiar you are with things like crewAI or autogen, but they are phenomenal, they absolutely crush all of the greatest base LLMs, but they are still a bit slow due to how many LLM calls they take. When we have a 10,000x speedup (which is pretty much guaranteed), then everyone will be able to use enormous agent frameworks like this in an instant.

I understand wanting to see humans as having a monopoly on “intelligence”, but quite frankly that era is coming to an end. It may be a bumpy ride, but the sooner humans learn to adjust to this new world, the better. I don’t think it is something that someone can really make someone else see, but once you do see it, it is very obvious. I suggest you check out the cutting-edge agent stuff out there and then imagine that the most impressive stuff will be routinely done from a single prompt in an instant. Then, on top of that, consider that the base LLMs that we have now are the worst there will ever be. We are in for a very wild ride.

People downvote me when I point this out in response to “AI will take our jobs” doomerism.

Even if AI is able to answer all questions 100% accurately, it wouldn’t mean much either way. Most of programming is making adjustments to old code while ensuring nothing breaks. Gonna be a while before AI will be able to do that reliably.

C-suites:

tHis iS inCReDibLe! wE cAn SavE sO MUcH oN sTafFiNg cOStS!