- cross-posted to:

- news@lemmy.world

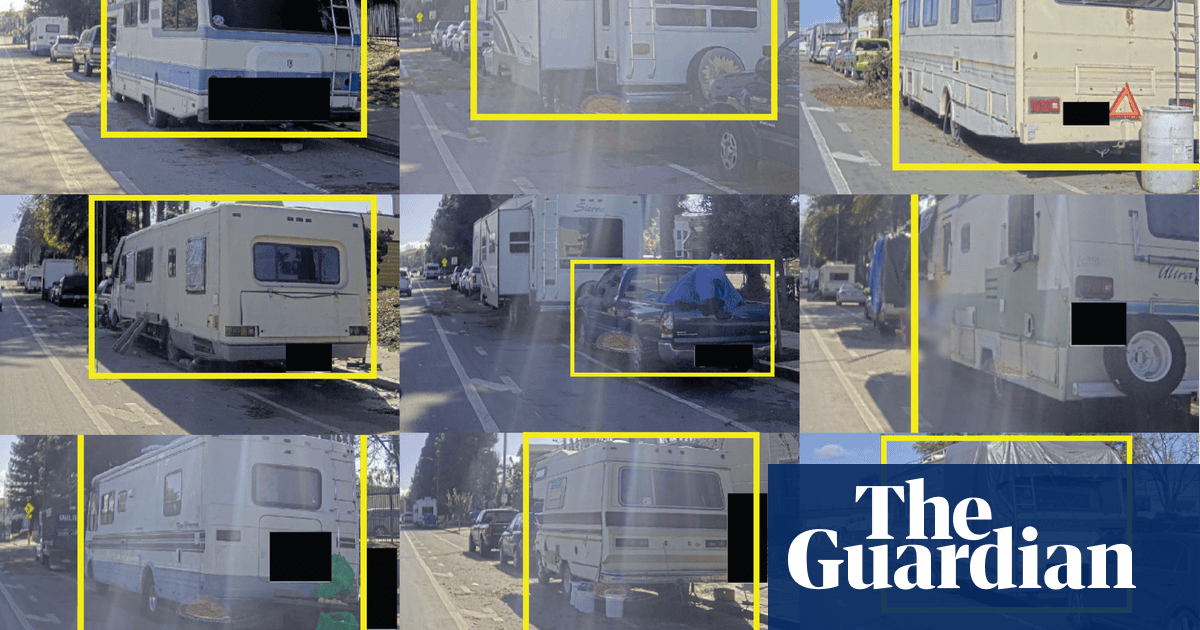

> Last July, San Jose issued an open invitation to technology companies to mount cameras on a municipal vehicle that began periodically driving through the city’s district 10 in December, collecting footage of the streets and public spaces. The images are fed into computer vision software and used to train the companies’ algorithms to detect the unwanted objects, according to interviews and documents the Guardian obtained through public records requests.

This might actually get struck down on constitutionality. How does one confront their accuser in court if the accuser is a trained neural net?

And that’s without even touching on the fact that ML is stochastic in nature, and should absolutely not be considered accurate enough to be an unsupervised and unmoderated single-point-of-failure decision engine in legal, medical, or other critical decision-making processes. The fact that ML regularly and demonstrably hallucinates (or otherwise yields garbage output) is just not acceptable in a regulatory sense.

Source: software engineer in biotech; we are specifically disallowed from using ML at any level in our work for the above reasons, as well as potential HIPAA-related data mining issues.

I don’t know much about jurisprudence, but wouldn’t the neural net be a tool of the person who brought the lawsuit?

Like, if you get brought in because of DNA evidence, you don’t have to face the centrifuge that helped extract your DNA from the sample, right?

You’re ignoring the fact that using such a failure-prone system to initiate legal proceedings against a citizen is ABSOLUTELY going to overload an already overloaded system. And that’s not even going into the fact that it puts an unjust burden on those falsely accused, or the fact that it’s targeting a segment of the population that’s a lot more likely to go “fuck it, I don’t care, how could things possibly get worse” (read: serious depression, PTSD, and other conditions, including neurodivergence, that often correlate with being unhoused). This is by design.

This is an all-around grade-A shit policy. It’s also a policy designed to treat the symptom instead of the cause. It will make the streets around San Jose look a bit nicer, and in doing so it will harm a lot of people.

I mean I’m not ignoring those facts. I prefaced by saying I don’t know much about jurisprudence.

Thanks for providing some insight though.

For what it’s worth, I didn’t intend to come off stabby or dismissive.